This function implements additional training iterations for a DGP emulator.

Arguments

- object

an instance of the `dgp` class.

- N

additional number of iterations to train the DGP emulator. If set to `NULL`, the number of iterations is set to `500` if the DGP emulator was constructed without the Vecchia approximation, and is set to `200` if the Vecchia approximation was used. Defaults to `NULL`.

- cores

the number of processes to be used to optimize GP components (in the same layer) at each M-step of the training. If set to `NULL`, the number of processes is set to `(max physical cores available - 1)` if the DGP emulator was constructed without the Vecchia approximation. Otherwise, the number of processes is set to `max physical cores available %/% 2`. Only use multiple processes when there is a large number of GP components in different layers and optimization of GP components is computationally expensive. Defaults to `1`.

- ess_burn

the number of burn-in steps for ESS-within-Gibbs at each I-step of the training. Defaults to `10`.

- verb

a bool indicating whether a progress bar will be printed during training. Defaults to `TRUE`.

- burnin

the number of training iterations to be discarded when calculating point estimates. Must be smaller than the overall number of training iterations implemented so far. If this is not specified, only the last 25% of iterations are used. This overrides the value of `burnin` set in `dgp()`. Defaults to `NULL`.

- B

the number of imputations to produce predictions. Increase the value to account for more imputation uncertainty. This overrides the value of `B` set in `dgp()` if `B` is not `NULL`. Defaults to `NULL`.
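
The `NULL` rules for `N`, `cores` and `burnin` described above can be sketched in base R. This is an illustration only: `resolve_defaults()` and its arguments `vecchia`, `physical_cores` and `trained_so_far` are hypothetical names, not part of the package. Clamping `cores` to at least 1, rounding the 75% cut-off down, and counting the new `N` iterations towards the overall total are assumptions made for the sketch.

```r
# Hypothetical helper (NOT part of the package): mirrors the documented
# NULL-handling rules for N, cores and burnin.
resolve_defaults <- function(N = NULL, cores = 1, burnin = NULL,
                             vecchia = FALSE,       # was Vecchia used at construction?
                             physical_cores = 8L,   # assumed physical core count
                             trained_so_far = 0L) { # iterations already run
  # N: 500 iterations without Vecchia, 200 with Vecchia
  if (is.null(N)) N <- if (vecchia) 200L else 500L

  # cores: (physical cores - 1) without Vecchia, physical cores %/% 2 with it
  if (is.null(cores)) {
    cores <- if (vecchia) physical_cores %/% 2L else physical_cores - 1L
    cores <- max(1L, cores)  # assumption: never fewer than one process
  }

  # Assumption: the overall total includes the N iterations about to be run
  total <- trained_so_far + N
  if (is.null(burnin)) {
    # Only the last 25% of iterations are used, so the first 75% are discarded
    burnin <- as.integer(floor(0.75 * total))
  } else if (burnin >= total) {
    stop("burnin must be smaller than the overall number of training iterations")
  }

  list(N = N, cores = cores, burnin = burnin)
}

# 500 earlier iterations plus the default 500 new ones, no Vecchia
resolve_defaults(cores = NULL, trained_so_far = 500L)
```

Passing `physical_cores` explicitly keeps the sketch independent of the machine it runs on; the package itself detects the core count.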

Details

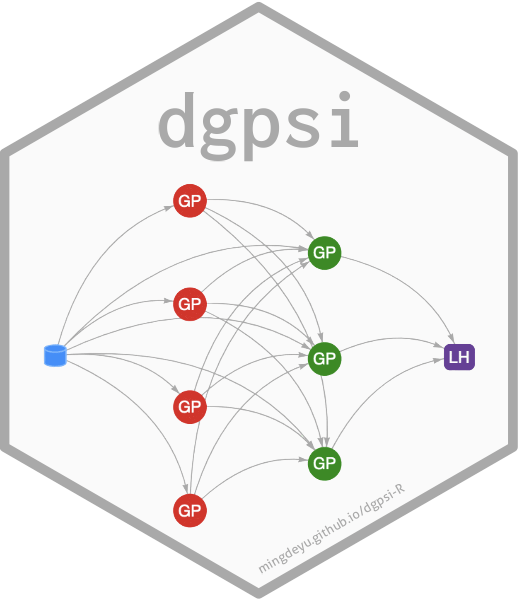

See further examples and tutorials at https://mingdeyu.github.io/dgpsi-R/dev/.

Note

One can also use this function to fit an untrained DGP emulator constructed by `dgp()` with `training = FALSE`.

The following slots: `loo` and `oos` created by `validate()`; and `results` created by `predict()` in `object` will be removed and not contained in the returned object.
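
A minimal sketch of the workflow in these notes, assuming this page documents `continue()` from the `dgpsi` package and that `dgpsi` and its Python environment are installed. The wrapper `fit_then_continue()` is a hypothetical name, and the iteration counts are arbitrary illustrative values.

```r
# Hypothetical wrapper; X and Y are training input and output matrices.
# Assumes the documented function is dgpsi::continue().
fit_then_continue <- function(X, Y) {
  # Construct the emulator without training it
  m <- dgpsi::dgp(X, Y, training = FALSE)

  # Fit the untrained emulator with 100 training iterations
  m <- dgpsi::continue(m, N = 100)

  # Validation adds a `loo` slot to the emulator
  m <- dgpsi::validate(m)

  # Further training: per the note above, `loo` is not carried over to the
  # returned object, so validate() must be run again afterwards if needed.
  # burnin = 100 is smaller than the 150 iterations run in total.
  m <- dgpsi::continue(m, N = 50, burnin = 100, B = 10)
  m
}

# Usage (not run here):
# m <- fit_then_continue(X, Y)
```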